A2RL Season 2 @ UMEX 2026

Joel Klimont

The second season of the A2RL (Abu Dhabi autonomous drone race) took place at the UMEX conference in Abu Dhabi from 20–22 January 2026. Compared to many robotics competitions, the rules of A2RL are exceptionally strict and therefore unusually fair: every team is equipped with exactly the same drone provided by the organizer. This removes hardware advantages entirely and shifts the focus to what ultimately decides performance in autonomy, namely perception, state estimation, and control software that remains reliable under real-world constraints.

The sensor setup is intentionally minimal. For onboard sensing, we only had the IMU on the flight controller and a single IMX219 rolling-shutter camera: no depth sensor, no external tracking, and no additional cameras. The entire pipeline must infer the drone’s motion and the location of the racing gates from these limited signals while flying at speeds that quickly expose even small estimation errors.

Team FlyBy’s core members are Joel Klimont (PhD student under Prof. Radu Grosu at TU Wien, Cyber-Physical Systems Group), Alexander Lampalzer (Master’s student at TU Wien), and Jakob Buchsteiner (Master’s student at TU Wien). We were additionally supported by Konstantin Lampalzer (Master’s student at TU Wien), Thisas Ranhiru (Bachelor’s student at RIT Dubai), and Akos Papp (student at HTL Wiener Neustadt and member of the robo4you robotics club).

Figure: Reaching speeds of 17 m/s on the training track.

Qualification: October and November

Before we could participate at UMEX, we first had to qualify through two rounds: a two-week qualification in October, followed by a three-week qualification and training phase in November. We passed both rounds comfortably and completed the first stage on the first day of testing, which gave us valuable time to systematically improve our stack instead of merely aiming for the minimum requirements. That additional time mattered. In October, we were typically flying at about 2–3 m/s; by the end of November, after extensive tuning and repeated evaluation cycles, we were already pushing towards 17 m/s. This was not simply a matter of flying faster: it required improving robustness across the entire pipeline, because faster flight reduces the margin for perception delays and state-estimation drift.

Figure: Our drones and tools in the pit area.

Perception and Timing Under Severe Constraints

At the core of A2RL is a perception-and-control loop that must run reliably at high speed. The main perception task is detecting the racing gates in the onboard camera images in a way that remains stable across lighting changes, motion blur, and rolling-shutter artifacts. For this, we trained a network that detects the gates in the image stream, and we designed a filter that fuses these visual updates with the IMU data.
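The post does not describe the filter itself. As a rough illustration of the general idea of fusing high-rate IMU data with lower-rate visual updates, here is a minimal single-axis linear Kalman filter. It assumes the gate detections can be turned into a position fix, uses the IMU acceleration as the prediction input, and all noise values are hypothetical; a full 3D estimator with attitude is considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class AxisKF:
    """Single-axis Kalman filter with state [position, velocity].

    IMU acceleration drives the prediction step; gate-based position fixes correct it.
    """
    pos: float = 0.0
    vel: float = 0.0
    # Symmetric 2x2 covariance stored as its three unique entries.
    p_pp: float = 1.0
    p_pv: float = 0.0
    p_vv: float = 1.0
    accel_noise: float = 0.5    # hypothetical IMU acceleration noise std (m/s^2)
    vision_noise: float = 0.05  # hypothetical std of a gate-derived position fix (m)

    def predict(self, accel: float, dt: float) -> None:
        """Propagate one IMU sample: x <- F x + G a, P <- F P F^T + Q."""
        self.pos += self.vel * dt + 0.5 * accel * dt * dt
        self.vel += accel * dt
        q = self.accel_noise ** 2
        p_pp = self.p_pp + 2.0 * dt * self.p_pv + dt * dt * self.p_vv
        p_pv = self.p_pv + dt * self.p_vv
        self.p_pp = p_pp + q * dt ** 4 / 4.0
        self.p_pv = p_pv + q * dt ** 3 / 2.0
        self.p_vv = self.p_vv + q * dt ** 2

    def update_vision(self, measured_pos: float) -> None:
        """Correct with a position measurement (H = [1, 0])."""
        s = self.p_pp + self.vision_noise ** 2   # innovation variance
        k_p, k_v = self.p_pp / s, self.p_pv / s  # Kalman gain
        innovation = measured_pos - self.pos
        self.pos += k_p * innovation
        self.vel += k_v * innovation
        p_pp, p_pv, p_vv = self.p_pp, self.p_pv, self.p_vv
        self.p_pp = (1.0 - k_p) * p_pp
        self.p_pv = (1.0 - k_p) * p_pv
        self.p_vv = p_vv - k_v * p_pv


kf = AxisKF()
true_pos, true_vel, accel, dt = 0.0, 0.0, 2.0, 0.001
for step in range(1000):  # 1 s of 1 kHz IMU data under constant acceleration
    true_pos += true_vel * dt + 0.5 * accel * dt * dt
    true_vel += accel * dt
    kf.predict(accel, dt)
    if step % 8 == 7:  # illustrative vision fix every 8 IMU samples (~125 Hz)
        kf.update_vision(true_pos)
pos_error = abs(kf.pos - true_pos)
var_after_fix = kf.p_pp
for _ in range(500):  # 0.5 s gap with no gate detections: prediction only
    kf.predict(accel, dt)
print(f"position std after fix: {var_after_fix ** 0.5:.3f} m, after gap: {kf.p_pp ** 0.5:.3f} m")
```

With noiseless synthetic data the estimate matches the truth; the interesting part is the printed uncertainty, which grows during the gap because only `predict` runs. Longer stretches without strong visual corrections therefore leave the estimator more dependent on IMU integration.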

Our vision model runs onboard with a latency of roughly 5 ms per inference at a resolution of 820×616. We operate the camera and processing pipeline at 120 fps, which is essential to keep the delay between sensing and actuation small enough for high-speed flight. To reduce motion blur, our camera uses an exposure time of only 3 ms. This makes fast flight feasible, but it also introduces a new issue: some lamps flicker with the local 50 Hz power grid in Abu Dhabi, and with such short exposure times that flicker shows up in the images. In practice, this becomes another source of perception noise that the gate detector and filter must handle.
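The timing numbers in this paragraph can be checked with a few lines of arithmetic. At 120 fps the frame period is about 8.33 ms, so a 5 ms inference leaves roughly 3.3 ms of slack per frame for everything else. For the flicker, the sketch assumes the affected lamps pulse at twice the mains frequency (100 Hz for a 50 Hz grid), which is typical for lamps driven directly from AC. Sampled at 120 fps, a 100 Hz flicker aliases to a 20 Hz brightness pattern, and a 3 ms exposure integrates only 30% of each 10 ms flicker cycle, so consecutive frames see noticeably different brightness. The helper names are illustrative.

```python
def frame_budget_ms(fps: float, inference_ms: float) -> float:
    """Time left per frame after inference (ms); negative means the detector cannot keep up."""
    return 1000.0 / fps - inference_ms


def flicker_alias_hz(mains_hz: float, fps: float) -> float:
    """Apparent flicker frequency in the image stream.

    Assumes lamp intensity pulses at twice the mains frequency; sampling folds it
    to its distance from the nearest multiple of the frame rate.
    """
    flicker = 2.0 * mains_hz
    k = round(flicker / fps)
    return abs(flicker - k * fps)


def exposure_fraction(exposure_ms: float, mains_hz: float) -> float:
    """Fraction of one flicker period covered by the exposure window (capped at 1)."""
    period_ms = 1000.0 / (2.0 * mains_hz)
    return min(exposure_ms / period_ms, 1.0)


budget = frame_budget_ms(fps=120, inference_ms=5)
alias = flicker_alias_hz(mains_hz=50, fps=120)
print(f"slack per frame: {budget:.2f} ms")
print(f"flicker seen as {alias:.0f} Hz, repeating every {120 / alias:.0f} frames")
print(f"exposure covers {exposure_fraction(3, 50):.0%} of a flicker cycle")
```

This also explains why anti-flicker camera modes for 50 Hz regions use exposures that are integer multiples of 10 ms, which average out the flicker. At racing speeds that is not an option: at 17 m/s the drone moves about 17 cm during a 10 ms exposure, versus about 5 cm during 3 ms.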

Precise timing and synchronization become increasingly important as the drone’s speed increases. To improve the time alignment between camera frames, IMU messages, and our estimator updates, we implemented our own extensions to the flight controller firmware for time synchronization and for batching IMU data. With this setup, we can access IMU data at up to 1 kHz, which matches the maximum output rate of the MPU6000’s accelerometer. These high data rates help bridge the gaps between reliable visual updates and improve estimator stability in fast sections of the track.
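The post does not detail the firmware extensions. A minimal sketch of the host-side half of such a scheme, assuming each batched IMU message carries flight-controller timestamps, could estimate the clock offset from the least-delayed message and then pick the IMU samples that fall between two camera frames. The message layout, the single gyro axis, and all numbers in the demo are hypothetical.

```python
from bisect import bisect_right

IMU_DT = 0.001  # 1 kHz IMU stream, as in the text


def estimate_offset(pairs):
    """Estimate (companion clock - flight-controller clock) in seconds.

    pairs: (fc_stamp, host_receive_time) for messages carrying an FC timestamp.
    host_receive_time - fc_stamp = offset + transport delay, and the delay is never
    negative, so the minimum over many messages is the least-delayed estimate.
    """
    return min(host - fc for fc, host in pairs)


def imu_between_frames(batch, offset, t_prev_frame, t_frame):
    """Return IMU samples (fc_stamp, gyro_z) whose host time lies in (t_prev_frame, t_frame].

    batch must be sorted by fc_stamp, e.g. one batched message from the flight controller.
    """
    host_times = [fc + offset for fc, _ in batch]
    lo = bisect_right(host_times, t_prev_frame)
    hi = bisect_right(host_times, t_frame)
    return batch[lo:hi]


def integrate_gyro(samples, dt=IMU_DT):
    """Rotation (rad) about one axis accumulated over the samples (simple Euler sum)."""
    return sum(rate * dt for _, rate in samples)


# Illustrative data: the FC clock lags the companion clock by about 5 s.
pairs = [(1.000, 6.0020), (1.010, 6.0105), (1.020, 6.0210)]  # made-up (fc, host) stamps
offset = estimate_offset(pairs)  # about 5.0005 s
batch = [(i * IMU_DT, 1.0) for i in range(100)]  # 100 ms of gyro data at a constant 1 rad/s
frame_prev, frame_now = 5.0 + 1 / 120, 5.0 + 2 / 120  # two consecutive 120 fps frames
window = imu_between_frames(batch, offset, frame_prev, frame_now)
print(len(window), round(integrate_gyro(window), 4))
```

At 1 kHz IMU and 120 fps camera rates, each frame interval contains 8 or 9 IMU samples, so an offset error of even half a millisecond changes which samples are attributed to a frame.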

Figure: More testing at the event location.

Control: MPC as a Baseline

For control, we used a model predictive control (MPC) approach until the end of November. The rationale was that MPC provides a structured way to track trajectories and allows systematic tuning as the vehicle dynamics and estimation characteristics become better understood. Over time, as the speed targets increased, we reached a point where reliable tracking required increasingly careful tuning and where small state-estimation imperfections started to strongly affect the controller’s performance.
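The post does not show the controller. For readers unfamiliar with MPC, the core loop is: predict the vehicle over a short horizon with a model, choose the input sequence that minimizes a tracking cost, apply only the first input, and repeat at the next step. The sketch below is a deliberately reduced instance, not our controller: one axis modeled as a double integrator, a quadratic cost, and no input or state constraints. Without constraints the optimization has a closed-form solution via a backward Riccati recursion, and because the model is time-invariant the first-step gain is identical at every step, so it is computed once. A real drone MPC uses a nonlinear thrust and attitude model and enforces actuator limits with a numerical solver; all weights and values here are illustrative.

```python
def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def mpc_gain(dt, horizon, q_pos, q_vel, r):
    """First-step feedback gain of an unconstrained finite-horizon tracking problem.

    Model: x = [position error, velocity error], input u = acceleration.
    Cost: sum of q_pos*e_p^2 + q_vel*e_v^2 + r*u^2 over the horizon.
    """
    A = [[1.0, dt], [0.0, 1.0]]
    B = [[0.5 * dt * dt], [dt]]
    Q = [[q_pos, 0.0], [0.0, q_vel]]
    At, Bt = transpose(A), transpose(B)
    P = Q  # terminal cost
    K = [[0.0, 0.0]]
    for _ in range(horizon):  # backward Riccati recursion from the end of the horizon
        BtP = matmul(Bt, P)
        s = r + matmul(BtP, B)[0][0]
        K = [[v / s for v in matmul(BtP, A)[0]]]
        AtP = matmul(At, P)
        AtPA = matmul(AtP, A)
        AtPBK = matmul(matmul(AtP, B), K)
        P = [[Q[i][j] + AtPA[i][j] - AtPBK[i][j] for j in range(2)] for i in range(2)]
    return K[0]  # gain for the first step, the only one that is applied


dt = 0.01
k_pos, k_vel = mpc_gain(dt, horizon=200, q_pos=10.0, q_vel=1.0, r=0.01)
ref_speed = 5.0       # illustrative constant-speed reference starting at 1 m
pos, vel = 0.0, 5.0   # drone starts 1 m behind the reference at matching speed
for step in range(500):  # 5 s of closed-loop simulation
    t = step * dt
    e_pos = pos - (1.0 + ref_speed * t)
    e_vel = vel - ref_speed
    u = -(k_pos * e_pos + k_vel * e_vel)  # first input of the optimal sequence
    pos += vel * dt + 0.5 * u * dt * dt
    vel += u * dt
final_error = pos - (1.0 + ref_speed * 500 * dt)
print(f"gains: {k_pos:.1f}, {k_vel:.1f}; final position error: {final_error:.2e} m")
```

The model enters only through `A` and `B`. If the real vehicle responds differently, for example with delayed or weaker thrust, the predictions and therefore the chosen inputs are off, which is why model mismatch and estimation errors become harder to tune around as speeds increase.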

Returning to Abu Dhabi: Final Preparations

For the final event, only the top six of the fourteen teams that attempted qualification remained. One week before race week, we returned to Abu Dhabi for additional testing and tuning on site while continuing development work in parallel. Over December, all teams had continued to improve their systems at home, and the overall performance level had increased noticeably by the time we arrived.

We also came with new ideas, but it was initially unclear whether we would be able to deploy them in time and integrate them into our software stack. In particular, we were reaching the limits of our state estimation at higher speeds: the faster we flew, the longer the segments the system had to bridge without strong visual corrections, and the more sensitive it became to small inconsistencies between the real drone and the model used by our MPC.

After intensive development and repeated verification, we managed to bring a new state-estimation approach online within the first days of the week. The improvement in consistency and stability was immediately visible in our internal metrics and in live tracking, but it also shifted the bottleneck to the controller, which now had to follow significantly more aggressive trajectories without losing stability.

In practice, pushing our MPC to reliably track these faster trajectories turned out to be significantly more difficult than expected. The controller exhibited different failure modes depending on the desired speed and trajectory shape. Our remaining on-site testing time was limited, and strong teams such as KAIST, whose performance had been similar to ours in November, continued to improve their speed through persistent tuning. This made it clear that we needed an approach that would let us improve within days rather than weeks.

A Late Pivot to Reinforcement Learning

During December, we had started working on an experimental reinforcement learning (RL) controller that was originally intended for the next season, with the long-term goal of replacing MPC. Because state-estimation had become our primary focus in the weeks before the event, we had little time to test RL on real hardware, and the controller was not integrated into our software stack running onboard the drones.

One of the early versions of this RL project served as the students’ final project in the course Stochastic Foundations of Cyber Physical Systems, which we teach. However, going from something that could fly in simulation to deploying a trained agent in the real world under completely different constraints was still a long stretch.

An opportunity arose when the racing gates were moved from the testing location to the UMEX event track at ADNEC, leaving us a weekend without test time. We used that time to continue development and trained the first policies that looked promising.

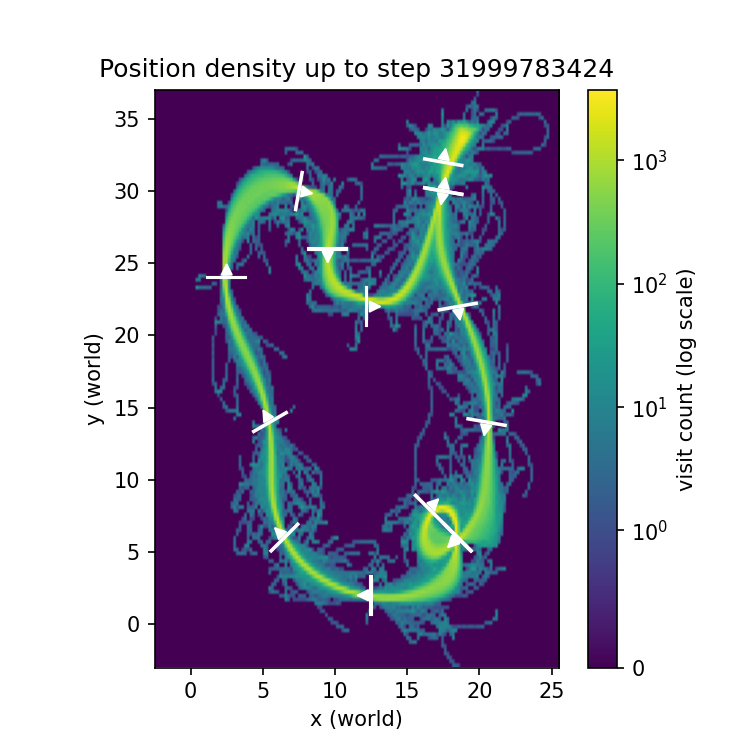

Our reinforcement learning workflow consists of two separate steps. First, we train policies offline to obtain the neural network weights. This training runs in a simplified environment so that we can iterate quickly, and because it is lightweight we can run many rollouts in parallel on the GPU. Second, we take the trained weights and load them into an inference node in our software stack, which runs either on the real drone or in our more realistic simulation. This inference node receives the observation computed by our state-estimation pipeline, runs it through the policy network to produce an action, and then sends that action to the flight controller.
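To make the second step more concrete, here is a sketch of what such an inference node could look like in plain Python, without a deep learning framework. The JSON weight format, the 12-value observation layout, the thrust-plus-body-rates action, and `MAX_RATE` are all assumptions for illustration; the post does not specify the network, the observation, or the command interface to the flight controller.

```python
import json
import math

OBS_DIM = 12     # hypothetical layout: gate-relative position (3), velocity (3), attitude (3), gyro (3)
ACT_DIM = 4      # hypothetical action: collective thrust + roll/pitch/yaw rates
MAX_RATE = 10.0  # hypothetical body-rate limit in rad/s used to scale actions


class PolicyNode:
    """Loads exported MLP weights and maps one observation to one flight-controller command."""

    def __init__(self, layers):
        self.layers = layers  # list of (weight rows, bias) pairs, applied in order

    @classmethod
    def from_json(cls, text):
        data = json.loads(text)
        return cls([(layer["w"], layer["b"]) for layer in data["layers"]])

    def forward(self, obs):
        if len(obs) != OBS_DIM:
            raise ValueError(f"expected {OBS_DIM} observation values, got {len(obs)}")
        x = list(obs)
        for weights, bias in self.layers:
            # Dense layer followed by tanh; tanh on the last layer keeps actions in [-1, 1].
            x = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
                 for row, b in zip(weights, bias)]
        return x

    def command(self, obs):
        a = self.forward(obs)
        thrust = 0.5 * (a[0] + 1.0)             # map [-1, 1] to normalized thrust [0, 1]
        rates = [MAX_RATE * v for v in a[1:]]  # body-rate setpoints in rad/s
        return thrust, rates


# Demo with hand-written weights: only the thrust output reacts to the first observation value.
weights = [[1.0 if (i == 0 and j == 0) else 0.0 for j in range(OBS_DIM)] for i in range(ACT_DIM)]
exported = json.dumps({"layers": [{"w": weights, "b": [0.0] * ACT_DIM}]})
node = PolicyNode.from_json(exported)
thrust, rates = node.command([0.5] + [0.0] * (OBS_DIM - 1))
print(round(thrust, 4), rates)
```

Keeping the onboard forward pass small and dependency-free makes it easy to audit against the training code; in practice the weights come from the offline training run rather than being written by hand as in the demo.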

Initially, these two stages did not align: the policies looked strong during training, but when we deployed the same weights through our inference node, using estimator-generated observations, the behavior became unstable in both realistic simulation and on the real drone, repeatedly failing around the fourth gate. The cause was a bug in the production inference node where part of the observation was constructed incorrectly. The gyro readings expected by the policy were effectively always zero because they were not forwarded through our estimator interface. After fixing the observation bug, the same trained weights immediately produced stable behavior in deployment.
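This class of bug is easy to introduce when an observation is assembled field by field and missing fields silently default to zero. One defensive pattern, sketched here under assumptions, is to make the observation builder fail loudly when a field is absent and to monitor channels that should never stay exactly zero in flight. The dictionary interface, the field names, the three-values-per-field layout, and the window length are hypothetical.

```python
from collections import deque

# Hypothetical estimator fields, in the order the policy expects them.
REQUIRED = ("gate_rel_pos", "velocity", "attitude", "gyro")


def build_observation(estimate: dict) -> list:
    """Concatenate estimator fields into a flat observation; never zero-fill missing data."""
    obs = []
    for key in REQUIRED:
        if estimate.get(key) is None:
            raise KeyError(f"estimator did not provide '{key}'")
        values = list(estimate[key])
        if len(values) != 3:
            raise ValueError(f"'{key}' has {len(values)} values, expected 3")
        obs.extend(float(v) for v in values)
    return obs


class DeadChannelMonitor:
    """Flags an observation slice that stays exactly zero for `window` consecutive steps."""

    def __init__(self, start: int, stop: int, window: int = 200):
        self.start, self.stop = start, stop
        self.history = deque(maxlen=window)

    def check(self, obs) -> bool:
        self.history.append(all(v == 0.0 for v in obs[self.start:self.stop]))
        return len(self.history) == self.history.maxlen and all(self.history)


estimate = {"gate_rel_pos": [4.0, 0.5, -0.2], "velocity": [10.0, 0.0, 0.0],
            "attitude": [0.0, 0.1, 0.0], "gyro": [0.0, 0.0, 0.0]}  # gyro forwarded as zeros
monitor = DeadChannelMonitor(start=9, stop=12, window=5)  # gyro occupies indices 9..11
alarms = [monitor.check(build_observation(estimate)) for _ in range(6)]
print(alarms)
```

A real gyro signal is never exactly zero for long while flying, so a persistent all-zero slice is a strong hint that a field is not being forwarded, which is exactly the failure described above.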

First Stable Runs and Policy Iteration

Figure: Event location at ADNEC during the UMEX conference.

With the bug fixed, we used our last testing slot on Monday, at around 2 am, to validate the updated controller. The drone immediately completed three laps before being manually stopped, holding a stable target speed of approximately 12 m/s across the full track. Beyond the raw speed, what mattered most was that the RL policy remained stable in track segments where MPC had struggled with trajectory tracking, and it appeared more robust to the state corrections that occurred after longer stretches without vision updates.

This meant we could proceed with systematic policy iteration. At that point, our best MPC lap time was 9.4 seconds. The first stable 12 m/s RL policy was still slower, at around 11 seconds per lap, so our focus shifted to training faster policies while keeping enough robustness to finish consistently.

Tuesday: Building a Portfolio of Policies

Tuesday was our last full day to test and select policies before the competition days on Wednesday and Thursday. We organized our agents into families according to training parameters and intended speed targets, and we evaluated them not only by their expected lap time but also by how well they tracked their racing line and how well their flight style matched what our state estimation could support in the real environment.

We found that speed alone does not determine performance. Some policies learn more efficient racing lines and smoother gate approaches, which can reduce estimator stress and improve overall reliability. By the end of the day, we had a portfolio ranging from slower, safer policies to faster, riskier ones, which occasionally crashed due to fast maneuvers close to the gate corners. During that day, one RL agent achieved a lap time of 8.6 seconds, beating our best MPC lap of 9.4 seconds by 0.8 seconds.

Each agent typically took about 25 minutes before we could see whether it was learning a viable racing line. After about 40 minutes, we could observe the first promising fast runs in simulation, and after roughly 1.5 hours of training we usually had a policy ready for real-world deployment. Slower policies were trained on an RTX 4070 laptop GPU; faster policies, which require longer training, were trained on an RTX 4090 in a remote cluster provided by robo4you.

Figure: Evaluating preliminary training results in the simulator.

Wednesday: Speed Racing

Speed racing is the time-trial discipline of A2RL, where each team flies two continuous laps as fast as possible. We first deployed our safer 12 m/s policy to secure a clean time and appear on the leaderboard, and then continued with faster agents. During these runs, we achieved an 8.0-second lap with a policy that reached around 17 m/s, providing a strong basis for the multi-drone competition.

Figure: Debug information about the raceline the agent learns at the end of training.

Multi-Drone Racing: The Silver Group

Qualifying placed us in the Silver group (teams 4–6) together with KAIST and CVAR UPM, both of which were using MPC. We knew KAIST had demonstrated faster lap times, but we had also observed frequent crashes. CVAR, while slower, was remarkably consistent and therefore collected points by finishing reliably, which can be decisive in group-based point scoring.

We began with a stable RL policy, prioritizing collision-free finishes and predictable behavior while racing with other drones on the track. Across the first five races we collected 15 out of 25 points, finishing first three times. In one race, a collision with another drone knocked the other team out, while our RL policy continued and our state estimation remained stable even after the impact.

As the competition progressed, it became clear that KAIST was steadily improving and that our lead, while strong, was not completely safe. We therefore decided to deploy a newly trained, higher-speed policy that showed excellent metrics in training. Because the time between races was only a few minutes, we did not have the opportunity to manually validate the agent in the simulator, and instead relied on the automatic evaluation results from our training pipeline.

The estimated lap time was 7.3 seconds, with peak speeds near 20 m/s on the straights. From previous experience, we knew that real-world lap times are typically 300–500 ms slower than the automatic simulation estimate, but even with that margin the policy was expected to be significantly faster than anything we had deployed before.
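The arithmetic behind that expectation is simple: a 7.3 s simulation estimate plus a 300–500 ms sim-to-real penalty predicts a real lap between 7.6 and 7.8 seconds, still below the 8.0 s lap from the speed-racing day. The sketch below turns this into a small selection rule over a policy portfolio. The crash-rate metric, the threshold, and every candidate entry except the 7.3 s estimate are hypothetical; the post does not describe how candidates were ranked between races.

```python
from dataclasses import dataclass

SIM_TO_REAL_S = (0.3, 0.5)  # real laps were typically 300-500 ms slower than the sim estimate


@dataclass
class Candidate:
    name: str
    sim_lap_s: float       # automatic evaluation estimate from the training pipeline
    sim_crash_rate: float  # hypothetical metric: fraction of evaluation rollouts that crashed


def predicted_real_lap(c: Candidate):
    """(optimistic, pessimistic) real-world lap time in seconds."""
    return c.sim_lap_s + SIM_TO_REAL_S[0], c.sim_lap_s + SIM_TO_REAL_S[1]


def pick_policy(candidates, max_crash_rate):
    """Fastest candidate by pessimistic predicted lap among those under the crash-rate limit."""
    eligible = [c for c in candidates if c.sim_crash_rate <= max_crash_rate]
    if not eligible:
        return None
    return min(eligible, key=lambda c: predicted_real_lap(c)[1])


portfolio = [  # illustrative entries; only the 7.3 s estimate comes from the text
    Candidate("safe", 10.5, 0.00),
    Candidate("fast", 7.3, 0.02),
    Candidate("faster", 7.0, 0.15),
]
choice = pick_policy(portfolio, max_crash_rate=0.05)
print(choice.name, predicted_real_lap(choice))
```

Ranking by the pessimistic end of the range is a conservative choice; the lap eventually recorded with that policy, 7.8 s, landed exactly at that end.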

The start signal in A2RL is given by beeps, but the actual “go” still depends on human reaction time, and, running on very little sleep, we had not been getting the best starts during the competition. This time, however, we launched almost simultaneously and passed the first gate narrowly ahead. Over the first lap we built a lead, and during a complex maneuver near the end of the lap, KAIST crashed and could not recover. CVAR remained in the race and continued to apply pressure through consistency, but our policy flew the remainder cleanly and recorded a lap time of 7.8 seconds. This not only beat our best MPC lap of 9.4 seconds by 1.6 seconds, but also set a new personal record for us.

With this result, our point lead became large enough that we secured first place in the Silver group. After months of development, two qualification stages, and an intense final week of testing, integration, and training, the race concluded with a result that reflected all the work we had put into our software.

Conclusion

A2RL is designed to be a pure software competition: every team flies the same drone with the same limited sensors, and results are determined by how robustly perception, state estimation, and control work together at high speed. For us, the season progressed from passing qualification and steadily increasing performance, to a state-estimation upgrade shortly before race week, and finally to a late transition from MPC to reinforcement learning, which ultimately gave us the consistency and speed needed to win our Silver group in multi-drone racing.

Acknowledgements

We thank Prof. Radu Grosu for his continuous support throughout the project, and Dr. Michael Stifter and the robotics club robo4you for their guidance and help. We also thank HTL Wiener Neustadt for letting us test in their sports hall on weekends, which made a major difference during intensive development phases. Finally, we thank Posedio for sponsoring and supporting our work.

Figure: Drones used in the competition.

Figure: https://x.com/AutonomousAD/status/2014661236374618379